Biocuration 2012

Great meeting: Biocuration 2012, Georgetown University, DC. When I leave a meeting with my head exploding with new ideas and a need to try them all out at once, I know I got my money’s worth, and then some. Even a three-hour flight delay, followed by discovering my car with a dead battery at 1am in the deserted Dayton Airport parking lot, did not dampen my enthusiasm upon return. I will make sure my dome light is off before I leave my car next time, though. What follows are bits and pieces from the meeting that I enjoyed. I’m doing this mostly from memory, two days later, so I may have an addendum once I get my notes together.

What is biocuration? Well, anything that has to do with annotating, labeling, indexing, or identifying biological entities. Almost exclusively genes in this conference. Genome databases, especially those of model organisms, employ curators to annotate, check and re-annotate the genomic data. Here’s a more elaborate explanation, taken from the website of the International Society for Biocuration:

Biocuration involves the translation and integration of information relevant to biology into a database or resource that enables integration of the scientific literature as well as large data sets. Accurate and comprehensive representation of biological knowledge, as well as easy access to this data for working scientists and a basis for computational analysis, are primary goals of biocuration.

The goals of biocuration are achieved thanks to the convergent endeavors of biocurators, software developers and researchers in bioinformatics. Biocurators provide essential resources to the biological community such that databases have become an integral part of the tools researchers use on a daily basis for their work.

Day 1 started off with many community annotation tools. I thought that the Wikipedia model for annotation was dead, but maybe I’m wrong. Many community efforts use a large number of experts, as opposed to a huge number of non-experts, which is what the speakers at the first session were discussing. Pombase (whose title drew some chuckles from the French speakers at my table), the Tetrahymena Genome Database Wiki and the Gene Wiki were presented. The Gene Wiki, presented by Andrew Su from TSRI, is a bona-fide crowdsourcing approach: not just Wikipedia-like, but actually composed of a set of 10,000 gene definition stubs folded into Wikipedia. Jennifer Harrow from Sanger presented a poster with an accession model of annotations: the “blessed annotator” who has been trained for 3 months and has the run of the wiki, and the “gatekeeper”, who has been trained in a 2-day workshop, and whose contributions need to be monitored. Lots of talks about trusted annotators, etc. Perhaps we should look to cryptography’s “circles of trust” to enable trusted annotations yet increase the number of curators. (I use “curation” and “annotation” interchangeably throughout.)

An afternoon workshop discussed who biocurators are. If you are a biocurator, there’s a good probability you are 31-50 years young (80%), female (60%), with a PhD (76%), and have been through the academic mill and found it to be a bad fit for one reason or another. You like your work, you rarely burn out, it is challenging and stimulating, and you are not in it for the money. (Few people in non-industry science are.) Actually, since non-profit science is run on soft money, funding is a serious concern, and your job may have a shorter half-life than you would care for it to have, as you are probably employed on a 3-5 year contract. Your boss is rarely a biocurator her/himself, which may mean that your job description is sometimes ill-defined.

After that, there was a whole session devoted to curation workflows and tools. If you are setting up your own genomic database, check these out: WebApollo, CvManGO and the Reactome. Attila Csordas from EBI presented PRIDE, a tool for curating proteomic data. While proteomic data are growing, there are few choices of software tools to annotate them. So PRIDE is a welcome player in the field.

Day 2 had a “Genomics, metagenomics comparative genomics” session, only without the metagenomics. 🙁 What I really liked was the ViralZone resource for viral genomes, out of SIB. High time someone did this for the most abundant biological particle on Earth, and the one responsible for most diversity in life.

The breakout sessions were my favorite, getting a chance to interact with like-minded people interested in similar questions. (That is, those that share my prejudices.) I went to the one organized by Marc Robinson-Rechavi and Frederic Bastian, which dealt with the question of quality in gene annotation. Here is the problem: when we annotate a gene with a function (or functions), we also need to say what evidence brought us to think that this gene does what it does. The most popular vocabulary for annotating genes is the Gene Ontology, or GO. GO provides us with evidence codes which allow the curator to state the evidence for the function they assign to a gene. Those range from experimental evidence codes such as “Inferred from Mutant Phenotype”, which are always entered by a human curator, to “Inferred from Electronic Annotation”, which has no human oversight. These evidence codes are used as a proxy for quality: people generally tend to accept that an experiment is stronger evidence for a gene’s function than an electronic inference. That may not necessarily be true. For example, high-throughput experiments result in many genes being assigned annotations wholesale. Even with an (uncharacteristically low) 5% error rate, a single paper used as a source from which 5,000 genes are annotated would result in 250 wrongly annotated genes. In addition, these types of experiments supply annotations that are not very specific, such as “protein binding” or “embryonic development”, terms that in many cases are too general to be useful. On the other hand, Nives Škunca of ETH Zurich has shown a beautiful study about how fully automated annotations may not be as inferior to human-curated ones as most people think, with some caveats. (Note: Nives also showed her work in a poster that won the best poster award at the meeting, and this work has just been accepted to PLoS Computational Biology. I will try to blog more about it once it’s published, it’s really brilliant.) The discussion revolved around how we should ascertain the quality of annotations, what would be considered a useful annotation, and how we can establish trustworthiness. Seems like there is quite a bit of work to be done, as people are only beginning to realize that this is a more complex problem than we thought. A major player in this will be the Evidence Ontology, or ECO, an elaborate ontology in the making that describes lines of evidence for gene annotation.
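For the back-of-the-envelope number in the high-throughput example (a 5% error rate applied to 5,000 annotated genes), here is a minimal Python sketch. The function name is my own, and it assumes every gene’s annotation is wrong independently with the same probability, which is a simplification rather than anything presented at the meeting.

```python
def expected_wrong_annotations(n_genes: int, error_rate: float) -> float:
    """Expected number of wrongly annotated genes.

    Assumption (hypothetical, for illustration): each gene's annotation
    is wrong independently with probability `error_rate`.
    """
    if n_genes < 0:
        raise ValueError("n_genes must be non-negative")
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    # Expected count of errors is simply n * p under this assumption.
    return n_genes * error_rate


if __name__ == "__main__":
    # One paper annotating 5,000 genes at a 5% error rate.
    print(expected_wrong_annotations(5000, 0.05))
```

Even a modest per-gene error rate adds up quickly when a single source annotates thousands of genes at once.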

Day 3: Attila Csordas, whom I mentioned earlier, organized an unconference session early in the morning. A few of us gave brief talks there. Ben Good from Andrew Su’s lab talked about biocuration through games. The idea is to do for biocuration what fold.it has done for protein folding. The Dizeez game quizzes you about diseases related to genes, and scores you according to how well you link genes to diseases. But as Andrew says on his blog:

Generally, the gene-disease links in structured databases will be reasonably correct (though likely not at all complete). When we analyze the game logs in aggregate, we expect that players’ answers will generally reinforce what’s already known. But given enough game player data, also expect that we’ll see multiple instances of gene-disease links that aren’t reflected in current annotation databases. And these are candidate novel annotations.

So there may be something there, although it is not the “wisdom of the crowds” that is being exploited, since I imagine that only people with advanced degrees in their field can contribute to Dizeez. You can see games from the Su lab on genegames.org. Sean Mooney from Buck talked about the Statistical Tracking of Ontological Phrases (STOP) project. The idea here is to automatically enrich GO annotation of genes with other ontologies, to get a more comprehensive description of their function, especially when it comes to disease. I talked about the Critical Assessment of Function Annotations (we finally submitted the paper, yay!). Attila talked about annotating proteomic data.

Great meeting. A big thank you to the organizers, it went without a hitch. Logistics, food, coffee were all fantastic. Looking forward to Cambridge next year! EDIT: a virtual special issue of Database has been published for this meeting. Some of the talks are there as papers. Open Access, of course.

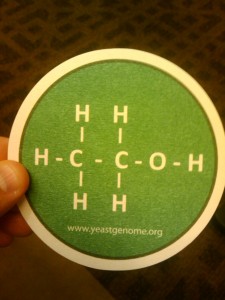

Finally, my favorite promotional item from the meeting:

Hi Iddo, Great to meet you at biocuration and thanks for summing things up here. For some reason genegames.org is heading straight to the dizeez game right now – should be going to what you see at genegames.com which includes the somewhat more advanced and more fun GenESP.

Where I’m sitting, both URLs lead to the page featuring GenESP and Dizeez. Check your routing tables, if you are at TSRI now.

Thanks for the post. I always picture the Biocuration meeting as a bunch of people sitting around arguing about how to define protein-coding gene boundaries. After reading your post it does sound moderately more interesting than that.

The Wikipedia annotation model is most definitely alive and well. You may be confusing it with the closed-wiki model which seems to have limited success. I’ve summarised some of the successes Rfam has had with this approach in a recent editorial I wrote. And I believe Pfam is generating even more impressive results with their trial.