Group review of papers?

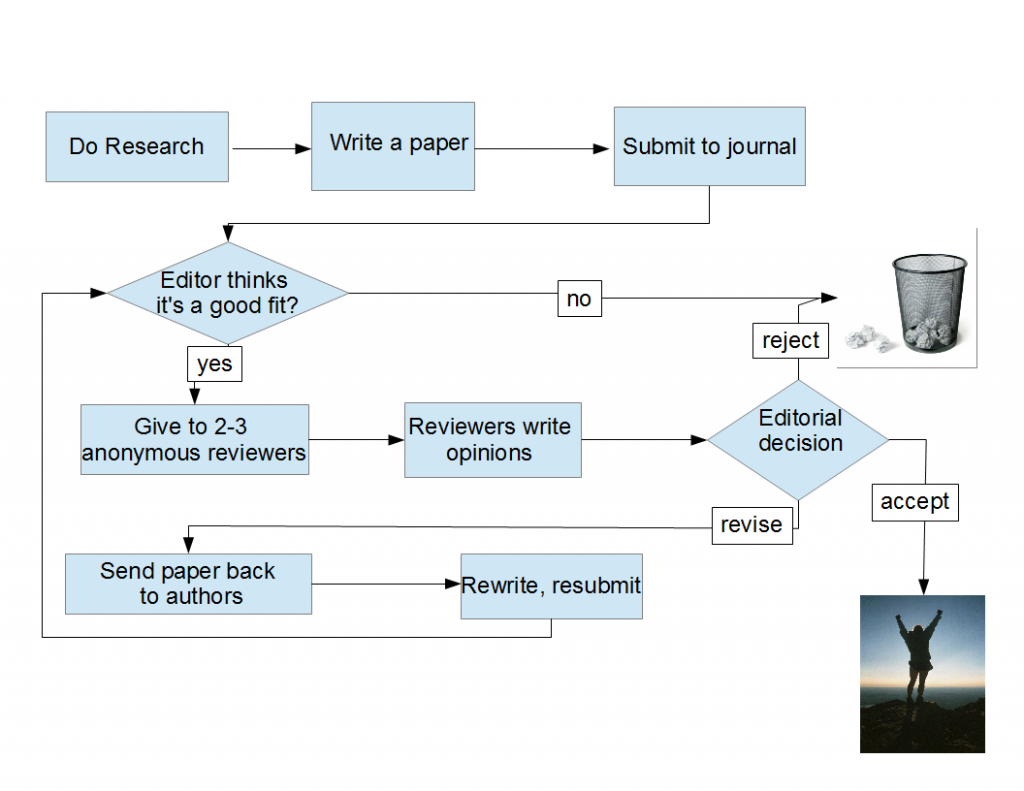

So I’ve been catching up on my paper reviewing duties this weekend. To those outside the Ivory Outhouse, “reviewing a paper” means “anonymously criticizing a research article considered for publication in a scientific journal”. (For those of you familiar with the process, you can jump to the text after the first figure.)

Here’s how science gets published: a group of scientists does research. They write up their findings as an article (typically called a “paper”) and submit it to a journal. An editor, who hopefully knows his/her business, considers whether the paper is a good fit for this journal. Is the topic of the paper typical of what this journal publishes? Are the findings interesting and exciting enough? (The words novel and impactful get tossed around a lot at this stage.) Is it well-written? Does it conform to journal standards of article length and format? Different journals have different thresholds for these criteria, with editorial rejection rates anywhere between 5 and 80% of submitted papers.

Once a paper passes the editor, it is sent to 2-3 reviewers (sometimes called “referees”). These are (hopefully) experts in the field. The reviews are usually anonymous, to allow the reviewers to express their opinions without fearing retribution — this is especially important when little fish like me get to review papers authored by the bigger fish.

The reviewers read the paper and critique it (“review” it). A reviewer is expected to write up a considered opinion of the paper: whether the science is sound, interesting and exciting (again: novel, impactful). If the paper has errors, can they be fixed and can the paper still be published? Each reviewer typically returns the review with one of four recommendations: reject, major revision, minor revision, accept. “Reject” means that the paper is a bad fit for the journal, because the science is bad, not interesting enough, or not new and exciting enough. “Major revision” would typically mean more work is needed: either more experiments or more data analysis or both. The reviewer considers the paper to be interesting, but not quite up to the scientific standards expected, and more work and another round of reviews are needed. “Minor revision” is great news: this means that the errors are minor, can be easily fixed, and the paper is generally good. Then there is the “accept” recommendation, which personally I have never seen, not as an editor, not as an author and not as a reviewer. At least, not in the first round of reviews.

The editor reads the reviews, reads the paper and makes an editorial decision. There may be a second round of reviews if the editor decides that the authors may revise their paper. Here is the typical flowchart:

All this preamble was just to set the stage for a problem I am having, and I suspect that others are having too. You see, when I review a paper, I sometimes have the following experience:

In a previous post I have written that:

As a referee, I see myself more as a midwife (or whatever is the male counterpart) than a gatekeeper. I am not interested so much in keeping bad papers out (that is actually fairly easy), but letting good science in, even when it presents itself feet first and covered in gunk (OK, that was a rotten analogy, but you know what I mean). Anything that can ease this process is more than welcome.

In that post I advocated that the reviewer should be able to communicate anonymously with the authors to clear up points that are unclear in the paper. This idea got some positive responses, although I have not heard that any journal is seriously considering it. (Edit: Frontiers journals have something like this in place, see below.) Technically, anonymous communication between authors and reviewers should not be hard to facilitate via a journal’s website. However, another idea, less uncomfortable and even easier to implement, would be to review by committee: the reviewers are indeed anonymous to the author, but I do not see a reason they should not be known to each other. What if the editor gave the reviewers each other’s contact information, so that they could discuss unclear points in the paper, and perhaps write up a group decision? If they disagree (as typically happens, and is good), two or three separate opinions can be submitted to the editor. In this way, the paper may have a better chance of not being rejected due to a misunderstanding.

This is actually how grant proposals are typically reviewed. For grant proposals, though, there are typically 20 reviewers, and they all get together in a room, which can be expensive (flying everyone to the discussion panel’s location, housing and feeding them). But when only 2-3 people are reviewing a paper, they can easily communicate via conference calls and email, discuss the paper, help each other understand its salient and problematic points, and hopefully write more informed reviews.

Any takers?

EDIT: Seems like the Frontiers journals are already using a similar model, only that the reviewers are revealed at the end of the process. Thanks to Casey Bergman for pointing that out.

Frontiers full reviews are made up of two consecutive steps, an independent and an interactive review. In the independent review phase, review editors evaluate independently from each other whether the research is academically sound following a standardized review questionnaire. Then, Frontiers implemented for the first time the real-time Frontiers Interactive Review Forum, in which authors and review editors collaborate online via a discussion forum until convergence of the review is reached.

I’m not sure about the committee idea. The whole advantage of having separate reviewers is that they don’t influence each other: if one of them spots a real gem in the paper, they can point it out, and if one of the reviewers is biased against it for some reason (e.g. doesn’t get it, or it doesn’t fit his view of how things should happen), then that reviewer doesn’t get to influence the others.

The obvious way would be an anonymous set of questions via the editor or the like.

Too much danger of groupthink. I much prefer having independent reviews, then sharing the reviews with the other reviewer. Most of the time, there are a couple of major points that everyone picks up, but each individual reviewer picks up something the others miss. I’d not want to be a referee on a committee, nor would I want my papers reviewed by committee.

Kevin, I agree that groupthink is a danger. Perhaps it is best to have individual reports written first, as you suggested, and only then a joint discussion. It can be as simple as sharing reviews prior to submitting them to the editor. A bit like in an NIH panel, where grants are first scored individually, then discussed, and then rescored. I still think that a group can produce insights that three individuals working alone cannot. I understand the word “committee” carries negative connotations, but if the review process is structured to avoid the groupthink trap, perhaps it will work. When refereeing a paper, I sometimes find it quite frustrating that I cannot discuss it with others.